Accurate geocoding is critical for businesses like logistics and insurance, where errors can lead to millions of euros in losses annually. Incorrectly mapped addresses can cause delivery delays, misplaced shipments, or flawed risk assessments. To ensure accuracy, six factors play a key role:

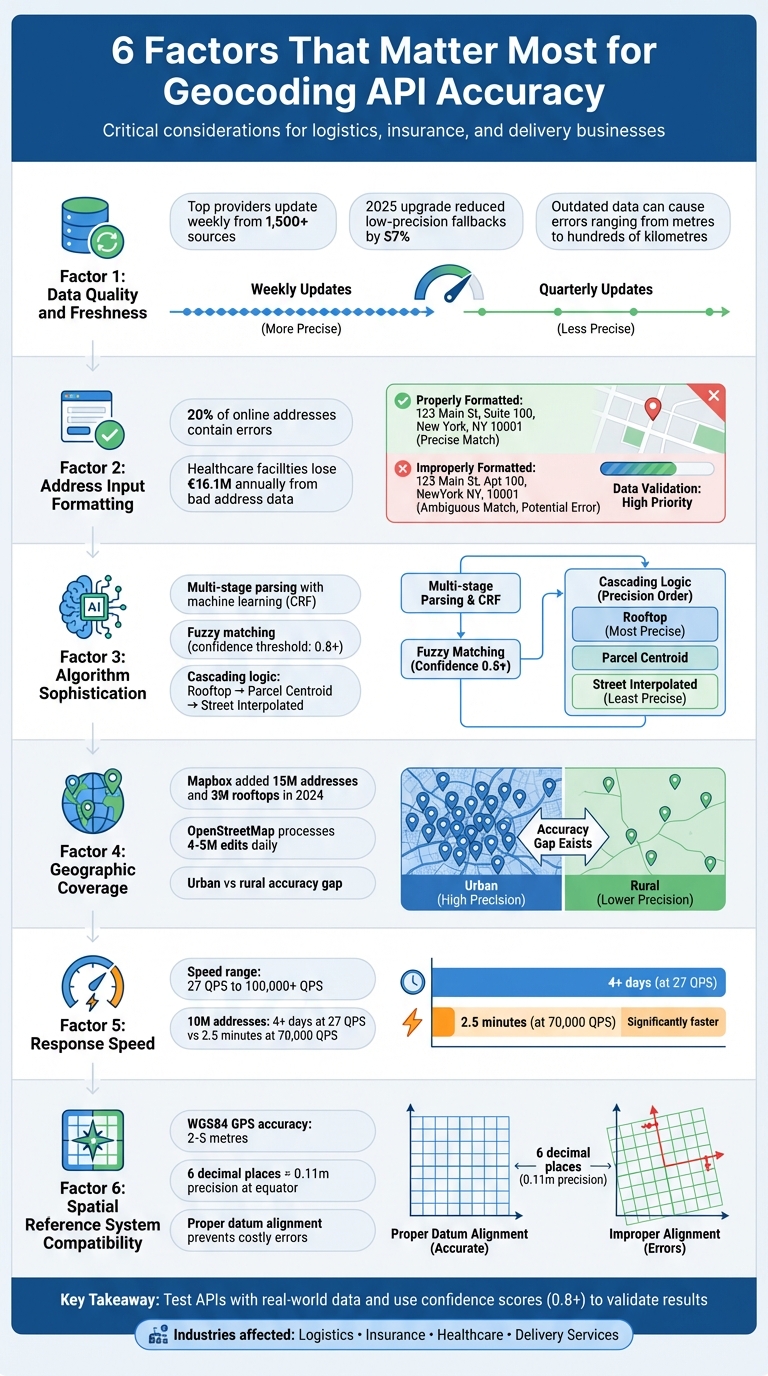

- Data Quality and Freshness: Frequent updates and cross-checking multiple sources reduce errors.

- Address Input Formatting: Clean, structured inputs prevent inaccuracies caused by typos or missing details.

- Algorithm Sophistication: Advanced parsing and fuzzy matching improve results, even with messy input.

- Geographic Coverage: Broad and detailed datasets handle urban and rural areas effectively.

- Response Speed: High processing speeds are essential for real-time applications like delivery tracking.

- Spatial Reference System Compatibility: Proper alignment of coordinate systems ensures precise mapping.

Key takeaway: Choose a geocoding API that aligns with your specific needs - whether it’s rooftop-level precision for logistics or handling high-volume batch processing. Test APIs with real-world data to identify strengths and weaknesses.

6 Critical Factors That Determine Geocoding API Accuracy

1. Data Quality and Freshness

At the heart of any geocoding API lies the accuracy and timeliness of its data. Addresses evolve - postal codes are updated, streets get renamed, and older locations are retired. When a geocoding service relies on outdated data, it can lead to false positives, where an address is incorrectly pinned, sometimes just metres away, but other times hundreds of kilometres off the mark.

Outdated or poor-quality data amplifies the risk of such errors. For businesses in logistics or insurance underwriting, these inaccuracies can result in losses amounting to millions of euros annually. The problem becomes even more pronounced in rapidly growing areas, where new streets and buildings are added faster than some databases can keep up.

Frequent updates are critical. Top-tier providers refresh their data weekly, pulling from over 1,500 sources, including government records, commercial datasets, and verified crowd-sourced inputs. This ensures new addresses and administrative changes are quickly incorporated. On the other hand, providers that update quarterly - or less frequently - risk missing crucial updates, leading to broader, less precise results, such as city- or postcode-level matches instead of rooftop accuracy. A 2025 upgrade to one geocoding engine reduced low-precision fallbacks by 57% through better data freshness and cross-verification.

What sets premium providers apart is their verification process. These services don’t just compile data - they cross-check multiple sources and use AI to detect anomalies before integrating updates, so inconsistencies are flagged and resolved before they reach the final coordinates. When assessing a geocoding API, find out how often its data is refreshed and what measures are in place to verify accuracy.

To further safeguard operations, never act on geocoding results without validation. Quality APIs provide confidence scores and match codes, which you can use to set thresholds that separate automated decisions from manual reviews. For instance, an address with a low confidence score should prompt human intervention before you finalise a delivery route or calculate an insurance premium.
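A minimal Python sketch of that triage follows. The `GeocodeResult` fields, the match-type labels, and the 0.8 cut-off are illustrative assumptions rather than any provider's schema; map them to the confidence scores and match codes your API actually returns.

```python
from dataclasses import dataclass

# Hypothetical result shape; real APIs name and scale these fields differently.
@dataclass
class GeocodeResult:
    lat: float
    lon: float
    confidence: float  # assumed to be normalised to the range 0.0-1.0
    match_type: str    # e.g. "rooftop", "parcel", "interpolated", "postcode", "city"

# Match types considered precise enough for automated use (an assumption to tune).
PRECISE_MATCH_TYPES = {"rooftop", "parcel"}

def triage(result: GeocodeResult, threshold: float = 0.8) -> str:
    """Return "auto" for confident, precise matches and "manual_review" otherwise."""
    if result.confidence >= threshold and result.match_type in PRECISE_MATCH_TYPES:
        return "auto"
    return "manual_review"

print(triage(GeocodeResult(52.52, 13.405, 0.95, "rooftop")))   # auto
print(triage(GeocodeResult(52.52, 13.405, 0.95, "postcode")))  # manual_review
print(triage(GeocodeResult(52.52, 13.405, 0.40, "rooftop")))   # manual_review
```

Requiring both a high score and a precise match type keeps a confident but coarse postcode-level hit from slipping into automated routing or pricing.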

2. Address Input Formatting and Completeness

Even the best geocoding API can't fix issues caused by poorly formatted or incomplete address data. Simply put, if the input data is flawed, the output will be too. As Davin Perkins from Smarty aptly states:

"If you put garbage in, you're going to get garbage out, even when it comes to geocoding!"

This becomes even more critical when you consider that roughly 20% of addresses entered online contain errors. These mistakes often stem from typos or "fat finger" errors on mobile devices. To avoid such issues, sticking to strict formatting rules is a must.

Missing address components create ambiguity and reduce accuracy. For instance, if the city or state is left out, the API may be unable to tell apart identically named streets in different towns. Secondary details like "Floor", "Suite", or "Apt" can sometimes be omitted when locating a building, but they are essential when you need to pinpoint a specific unit within it.

Why Proper Formatting Matters

- Postal Codes: Always treat postal codes as strings, not numbers, to avoid losing leading zeros (e.g., "02142" becoming "2142").

- Country Names: Use full country names to avoid confusion. For example, "CA" could mean Canada or California, while "DE" could refer to Germany or Delaware.
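Both pitfalls are easy to reproduce. The sketch below assumes addresses arrive as CSV: Python's `csv` module keeps every field as a string, whereas converting the postal code to a number drops its leading zero. `restore_us_zip`, `check_country`, and the `AMBIGUOUS_CODES` set are hypothetical helpers, and the set lists only a few examples, not every collision.

```python
import csv
import io

# A few two-letter values that are both ISO country codes and US state abbreviations
# (Canada/California, Germany/Delaware, India/Indiana, Panama/Pennsylvania).
AMBIGUOUS_CODES = {"CA", "DE", "IN", "PA"}

def restore_us_zip(value) -> str:
    """Re-pad a 5-digit US ZIP code that was already stored as a number.

    Only safe for fixed-width US ZIPs; other countries' postcodes vary in length
    and format, so keep them as strings from the start instead.
    """
    return str(value).strip().zfill(5)

def check_country(value: str) -> str:
    """Reject bare two-letter values that could mean a country or a US state."""
    if value.strip().upper() in AMBIGUOUS_CODES:
        raise ValueError(f"Ambiguous country value {value!r}; use the full country name")
    return value.strip()

raw = "street,city,postal_code,country\n1 Main St,Cambridge,02142,United States\n"
row = next(csv.DictReader(io.StringIO(raw)))  # every field stays a str

print(int(row["postal_code"]))         # 2142  (leading zero lost)
print(row["postal_code"])              # 02142 (kept as a string)
print(restore_us_zip(2142))            # 02142
print(check_country(row["country"]))   # United States
```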

When dealing with incomplete data, geocoding APIs often fall back on cascading match logic. They start with rooftop-level accuracy and, if needed, move to parcel centroids, street interpolations, or even city centroids as the data becomes less specific. To improve precision, use structured inputs: pass dedicated parameters like country, postal_code, or administrative_area rather than cramming everything into a single unformatted string.
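As an illustration, the helper below sends each component as its own query parameter instead of one free-form string. The endpoint URL and the parameter names are placeholders modelled on the ones mentioned above; real providers use their own names, so check their documentation.

```python
from typing import Optional
from urllib.parse import urlencode

# Placeholder endpoint; substitute your provider's structured-search URL.
BASE_URL = "https://geocoder.example.com/v1/search"

def build_structured_query(street: str, city: str, postal_code: str, country: str,
                           administrative_area: Optional[str] = None) -> str:
    """Send each address component as its own parameter (hypothetical names)."""
    params = {
        "street": street,
        "city": city,
        "postal_code": postal_code,  # a string, so leading zeros survive
        "country": country,
    }
    if administrative_area:
        params["administrative_area"] = administrative_area
    return f"{BASE_URL}?{urlencode(params)}"

print(build_structured_query("1 Main St", "Cambridge", "02142", "United States", "Massachusetts"))
```

`urlencode` handles escaping (spaces become `+`, non-ASCII characters are percent-encoded), so components with punctuation or accents reach the API intact.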

The Cost of Address Errors

Address mistakes aren’t just inconvenient - they can be expensive. In healthcare, for example, patient misidentification caused by bad address data costs the average facility €16.1 million annually due to lost revenue and denied claims. For logistics and delivery, these errors lead to failed deliveries, wasted fuel, and unhappy customers. Ultimately, precise geocoding relies on clean, well-formatted data, making attention to detail in address entry essential for avoiding costly mistakes.

3. Geocoding Algorithm Sophistication

Every accurate geocoding result relies on a complex algorithm working behind the scenes. These algorithms do more than just match addresses - they analyze, interpret, and validate data, even when the input is less than perfect. Understanding their inner workings can help you select the right API and configure it effectively.

Breaking Down Input with Multi-Stage Parsing and Fuzzy Matching

Modern geocoding algorithms begin by breaking raw input into smaller components like house numbers, street names, and postal codes. This process is powered by advanced machine learning tools, such as Conditional Random Fields (CRF), which handle messy or unstructured inputs that don't follow standard rules. These algorithms rely on strong data foundations and well-defined input standards to achieve high accuracy.

Once the input is parsed, fuzzy matching handles typos and variations. Levenshtein distance counts single-character edits, Jaro-Winkler tolerates transposed letters and rewards shared prefixes, and N-gram similarity compares overlapping character sequences, so each catches a different kind of error. To minimise ambiguity, matches are accepted only above a strict confidence threshold, typically 0.8 or higher.

Fuzzy matching is a powerful tool but requires careful calibration. As Korem notes:

"Fuzzy matching can be a double‐edged sword. If the geocoder is too ambiguous, it might return false‐positive results by modifying too many components".

Without precise settings, the risk of false positives increases significantly. A confidence threshold of 0.8 or above ensures a good balance between correcting errors and avoiding incorrect matches.
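To make the threshold concrete, here is a small sketch of Levenshtein-based fuzzy matching with a 0.8 cut-off. It normalises edit distance to a 0-1 similarity score; Jaro-Winkler or N-gram scoring could be swapped in behind the same `similarity` function. The function names and street names are made up for illustration.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions, or substitutions."""
    if len(a) < len(b):
        a, b = b, a  # keep the shorter string on the inner loop
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,               # deletion
                current[j - 1] + 1,            # insertion
                previous[j - 1] + (ca != cb),  # substitution (free if equal)
            ))
        previous = current
    return previous[-1]

def similarity(a: str, b: str) -> float:
    """Edit distance normalised to 0.0-1.0, case-insensitive (1.0 = identical)."""
    a, b = a.casefold(), b.casefold()
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1.0 - levenshtein(a, b) / longest

def best_match(query: str, candidates: list, threshold: float = 0.8):
    """Return the closest candidate, or None if nothing clears the threshold."""
    if not candidates:
        return None
    score, candidate = max((similarity(query, c), c) for c in candidates)
    return candidate if score >= threshold else None

print(best_match("Pensylvania Ave", ["Pennsylvania Ave", "Pembroke Ave"]))  # Pennsylvania Ave
print(best_match("Main St", ["Maple St"]))  # None (similarity 0.625)
```

Lowering the threshold to, say, 0.6 would accept "Maple St" for "Main St", which is exactly the kind of false positive the Korem quote warns about.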

Cascading Logic and Address Context

When the input data is unclear, advanced algorithms employ a "waterfall" or cascading logic approach. They first try to find a "Rooftop" match, which pinpoints an exact building. If that fails, they move to a "Parcel Centroid" match, and then to a "Street Interpolated" match. This tiered system demonstrates the algorithm's ability to manage uncertainty beyond simple formatting issues.
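The waterfall can be sketched as an ordered list of lookups, tried from most to least precise. The tier functions here are stand-ins (plain dictionaries with made-up coordinates), not a real provider's internals. The point is that the result carries the label of the tier that answered, so downstream logic can treat a parcel centroid differently from a rooftop match.

```python
from typing import Callable, Optional, Sequence, Tuple

Coordinate = Tuple[float, float]
# Each tier pairs a precision label with a lookup that returns a coordinate or None.
Tier = Tuple[str, Callable[[str], Optional[Coordinate]]]

def cascade_geocode(address: str, tiers: Sequence[Tier]) -> Optional[Tuple[str, Coordinate]]:
    """Try tiers from most to least precise and report which one answered."""
    for label, lookup in tiers:
        coordinate = lookup(address)
        if coordinate is not None:
            return label, coordinate
    return None  # nothing matched at any tier

# Stand-in data sources with made-up coordinates.
rooftops: dict = {}                                 # no building footprint on file
parcels = {"12 Farm Rd": (51.5010, -0.1420)}        # parcel centroid available
tiers = [
    ("rooftop", rooftops.get),
    ("parcel_centroid", parcels.get),
    ("street_interpolated", lambda address: None),  # placeholder tier
]

print(cascade_geocode("12 Farm Rd", tiers))  # ('parcel_centroid', (51.501, -0.142))
```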

Additionally, these algorithms interpret address components based on their position and regional conventions. For instance, house numbers come before street names in the US, but the reverse is true in Germany. Developers can further reduce ambiguity by including optional parameters like bounds, countrycode, or proximity to focus results on a specific area.
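A toy illustration of positional conventions: two regular expressions, one per country, that split a street line into house number and street name. This is a hypothetical rule-based sketch, far simpler than the CRF-style parsers described above, but it shows why knowing the country up front removes guesswork.

```python
import re

# Toy patterns: house number plus optional letter suffix, e.g. "5" or "5a".
PATTERNS = {
    # US convention: number before street, e.g. "1600 Pennsylvania Ave"
    "US": re.compile(r"^\s*(?P<number>\d+[A-Za-z]?)\s+(?P<street>.+?)\s*$"),
    # German convention: street before number, e.g. "Hauptstraße 5a"
    "DE": re.compile(r"^\s*(?P<street>.+?)\s+(?P<number>\d+[A-Za-z]?)\s*$"),
}

def parse_street_line(line: str, country_code: str) -> dict:
    """Split a street line into house number and street name using country conventions."""
    match = PATTERNS[country_code].match(line)
    if match is None:
        raise ValueError(f"Cannot parse {line!r} using {country_code} conventions")
    return match.groupdict()

print(parse_street_line("1600 Pennsylvania Ave", "US"))  # {'number': '1600', 'street': 'Pennsylvania Ave'}
print(parse_street_line("Hauptstraße 5a", "DE"))         # {'street': 'Hauptstraße', 'number': '5a'}
```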

Modern geocoders combine rule-based logic, machine learning parsing, and fuzzy reconciliation to produce accurate results. This multi-layered approach turns unreliable input into precise coordinates, complementing the importance of data quality and proper input formatting discussed earlier.

4. Geographic Coverage and Data Sources

For geocoding to achieve high levels of accuracy, it’s not just about refined algorithms or updated data - it’s also about how broad and detailed the geographic coverage is. The more comprehensive the coverage, the better geocoding systems can perform across different regions. However, no single dataset can perfectly map out addresses worldwide. Addressing systems vary widely - not just between countries but even within regions. What works well in Berlin might struggle in rural Brandenburg, and this pattern holds true globally.

Urban Precision vs. Rural Drift

The gap between urban and rural geocoding accuracy is clear. Urban areas often produce highly accurate "rooftop" matches, thanks to well-maintained street address data and frequent updates. Rural areas, on the other hand, can be trickier. Here, geocoding results might rely on parcel centroids or street interpolation, which are less precise. A real-world example: In December 2024, Saucey, an on-demand delivery service, slashed delivery times by 15% after adopting the Mapbox Geocoding API. This improvement came from providing drivers with pinpointed locations rather than vague approximations.

The challenge grows with larger parcels. As Davin Perkins from Smarty points out:

"Knowing the precise location of the primary structure on a parcel can make a huge difference... Even being off by just a dozen metres could seriously skew the results".

To uncover these limitations, test your API with non-standard addresses like farms or irregularly shaped plots. The contrast between urban and rural accuracy underscores the importance of using multiple, region-specific data sources.

Why Multiple Data Sources Matter

Relying on more than one dataset is key to improving geocoding reliability in both urban and rural areas. APIs that combine data from government records, commercial databases, satellite imagery, and crowd-sourced platforms consistently deliver better results than those tied to a single source. OpenStreetMap, for instance, processes between 4 million and 5 million edits daily, showing how quickly location data evolves. In 2024, Mapbox expanded its global reach by adding 15 million new addresses and 3 million rooftops, updating data across 83 countries, including regions like Egypt and the Isle of Man.

Regional quirks further complicate geocoding. In Canada, for example, "Ste" might mean "Suite" in English or "Sainte" (the feminine form of "Saint") in French. Without context-aware systems, such ambiguities can lead to errors. Choosing an API that returns match types and confidence scores helps you distinguish highly accurate rooftop matches from less dependable city-centre centroids.
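A context-aware rule can resolve many such cases. The heuristic below is a hypothetical example, not a production rule set: it reads "Ste" followed by a unit number as "Suite", and "Ste-" joined to a capitalised name, as in Quebec place names such as Sainte-Foy, as "Sainte".

```python
import re

def expand_ste(line: str) -> str:
    """Expand the abbreviation "Ste" from its context (toy heuristic).

    "Ste" or "Ste." followed by a unit number becomes "Suite";
    "Ste-" joined to a capitalised name, as in Quebec place names, becomes "Sainte-".
    """
    line = re.sub(r"\bSte\.?\s+(?=#?\d)", "Suite ", line)
    line = re.sub(r"\bSte-(?=[A-ZÀ-Ý])", "Sainte-", line)
    return line

print(expand_ste("200 Main St Ste 400"))             # 200 Main St Suite 400
print(expand_ste("12 rue Principale, Ste-Foy, QC"))  # 12 rue Principale, Sainte-Foy, QC
```

Anything the rules do not recognise is left untouched, which is safer than guessing.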

5. Response Speed and Processing Capacity

Speed plays a critical role in real-time geocoding applications, such as checkout flows or delivery tracking. If an API struggles to keep up with demand or processes requests too slowly, the results can suffer. The Radar Team emphasizes this point:

"A single bad coordinate can mean a missed delivery, a false fraud flag, or a broken customer experience".

To put things into perspective, processing speeds can range from 27 QPS (queries per second) to over 100,000 QPS. At the lower end, geocoding 10 million addresses would take over four days, while at 70,000 QPS, the same task is completed in under 2.5 minutes. For businesses handling hundreds of millions of addresses each month - like those conducting regular risk assessments - this difference isn't just about speed; it directly affects how efficiently operations run. While raw processing speed is the foundation, managing high-volume, real-time requests requires additional fine-tuning.
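The figures above follow from simple division, which is worth repeating with your own volumes. The hypothetical helper below assumes a perfectly sustained rate and ignores retries, rate-limit pauses, and network overhead, so treat its output as a lower bound.

```python
def batch_duration_seconds(n_addresses: int, qps: float) -> float:
    """Time to geocode a batch at a sustained rate (no retries, pauses, or overhead)."""
    return n_addresses / qps

for qps in (27, 70_000):
    seconds = batch_duration_seconds(10_000_000, qps)
    print(f"{qps:>6,} QPS: {seconds / 86_400:.2f} days = {seconds / 60:,.1f} minutes")
```

At 27 QPS the batch takes roughly 4.3 days; at 70,000 QPS it takes about 2.4 minutes.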

Real-time applications are especially sensitive to latency, particularly when users enter incomplete or ambiguous addresses. One effective solution is a Place Autocomplete service that guides users toward valid addresses before geocoding begins, which reduces errors. Standard geocoding APIs often struggle with this kind of partial input, so robust server capacity matters just as much as raw speed.

When infrastructure falls short, systems may slow down or force requests into queues. Cloud-based providers offer an advantage here, as they can quickly scale server capacity to manage traffic spikes, unlike systems with limited infrastructure that can create bottlenecks. During large-scale operations - like bulk data imports or seasonal surges - performance can shift dramatically. The Radar Team highlights this challenge:

"If you blast a provider with millions of near duplicate requests during an ETL job, you will find rate limits and long tail latencies you never saw during development".

Proper server capacity does more than just speed things up - it ensures accuracy even under heavy demand. To maintain reliable performance during high loads, moving geocoding operations to the backend can offer better control over API performance. This approach allows businesses to manage API keys, quotas, and retries more effectively. It also supports deduplication and caching, which help cut down on latency and reduce overall costs.
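A minimal backend sketch of deduplication, caching, and retries follows. `geocode` stands in for whatever client function calls your provider and is assumed to raise `ConnectionError` on transient failures. The normalisation rule and backoff values are illustrative. Check your provider's terms before persisting results, since some restrict how long coordinates may be cached.

```python
import time
from typing import Callable, Dict, Iterable, Optional, Tuple

Coordinate = Tuple[float, float]

def normalise_key(address: str) -> str:
    """Collapse case and whitespace so trivially different strings share one cache entry."""
    return " ".join(address.casefold().split())

def geocode_batch(addresses: Iterable[str],
                  geocode: Callable[[str], Optional[Coordinate]],
                  max_retries: int = 3,
                  base_delay: float = 0.5) -> Dict[str, Optional[Coordinate]]:
    """Call `geocode` once per distinct address, retrying transient errors with backoff.

    `max_retries` is the total number of attempts and must be at least 1.
    """
    cache: Dict[str, Optional[Coordinate]] = {}
    results: Dict[str, Optional[Coordinate]] = {}
    for address in addresses:
        key = normalise_key(address)
        if key not in cache:
            for attempt in range(max_retries):
                try:
                    cache[key] = geocode(address)
                    break
                except ConnectionError:
                    if attempt == max_retries - 1:
                        raise  # give up after the final attempt
                    time.sleep(base_delay * 2 ** attempt)  # waits 0.5 s, then 1 s, ...
        results[address] = cache[key]
    return results
```

For an ETL job, the same cache would typically live in a shared store rather than a local dictionary, so repeated runs avoid calling the provider again.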

6. Spatial Reference System Compatibility

When dealing with geospatial data, ensuring the compatibility of spatial reference systems is a critical step. It bridges the gap between raw coordinate data and accurate mapping, enabling precise geospatial insights.

A Spatial Reference System (SRS), also known as a Coordinate Reference System (CRS), provides the mathematical framework to locate points on Earth. It incorporates components like an ellipsoid, datum, projection, and measurement units. Without proper alignment, even correctly formatted addresses can end up misplaced.

For instance, Geographic Coordinate Systems (GCS) use angular units like degrees, which are well-suited for GPS applications. In contrast, Projected Coordinate Systems (PCS) map the Earth onto a flat plane using linear units, making them ideal for calculating distances and areas accurately. Mixing systems - such as WGS84 and NAD83 - without proper conversion can lead to discrepancies. As Smarty highlights:

"Mixing data from WGS84, UTM, or NAD83 without proper conversion can cause points to appear in the wrong place, leading to misaligned maps, inaccurate analysis, or even costly errors in navigation".

Different datums anchor coordinates to the Earth differently, so the same latitude and longitude can refer to slightly different physical points depending on the datum. Modern GPS devices using WGS84 can achieve location accuracy within 2–5 metres under optimal conditions, and recent updates to WGS84 have refined its positional accuracy relative to the Earth's crust to within centimetres. Projections add their own considerations: the Universal Transverse Mercator (UTM) system divides the globe into 60 zones, each 6° of longitude wide, to limit local distortion.
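Picking the right UTM zone is simple arithmetic on the 6° grid. The sketch below computes the zone number and the matching EPSG code for WGS84 / UTM (326xx in the northern hemisphere, 327xx in the southern). It deliberately ignores the irregular zones around south-west Norway and Svalbard; for actual coordinate transformations, a maintained library such as pyproj is the safer choice.

```python
import math

def utm_zone(lon: float) -> int:
    """Standard UTM zone number (1-60) for a longitude in degrees.

    Ignores the Norway/Svalbard exceptions to the regular 6-degree grid.
    """
    if not -180.0 <= lon <= 180.0:
        raise ValueError(f"longitude {lon} outside [-180, 180]")
    return min(int(math.floor((lon + 180.0) / 6.0)) + 1, 60)  # lon = 180 stays in zone 60

def utm_epsg(lat: float, lon: float) -> int:
    """EPSG code for WGS84 / UTM: 326xx north of the equator, 327xx south of it."""
    return (32600 if lat >= 0 else 32700) + utm_zone(lon)

print(utm_epsg(52.52, 13.405))   # 32633 (Berlin, zone 33, northern hemisphere)
print(utm_epsg(-33.87, 151.21))  # 32756 (Sydney, zone 56, southern hemisphere)
```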

To maintain universal compatibility, it’s advisable to store raw data in WGS84 (EPSG:4326) and convert it to a projected system like UTM for calculations requiring precision. Always document EPSG codes in metadata for clarity. Ensure incoming coordinates are valid by verifying that latitude values fall between -90 and 90, and longitude between -180 and 180. For most geocoding tasks, six decimal places in decimal degrees provide sufficient precision - equivalent to about 0.11 metres at the equator.
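The validation and precision rules can be sketched as follows. The helper name is hypothetical. The metres-per-degree figure uses the WGS84 equatorial radius of 6,378,137 m, which is where the roughly 0.11 m per sixth decimal place comes from.

```python
import math

# Metres per degree of longitude at the equator, from the WGS84 semi-major axis.
EQUATOR_M_PER_DEGREE = 2 * math.pi * 6_378_137 / 360  # ~111,319.5 m

def validate_and_round(lat: float, lon: float, places: int = 6) -> tuple:
    """Reject invalid WGS84 decimal-degree coordinates, then round to `places` decimals."""
    if not (math.isfinite(lat) and math.isfinite(lon)):
        raise ValueError("coordinates must be finite numbers")
    if not -90.0 <= lat <= 90.0:
        raise ValueError(f"latitude {lat} outside [-90, 90]")
    if not -180.0 <= lon <= 180.0:
        raise ValueError(f"longitude {lon} outside [-180, 180]")
    return round(lat, places), round(lon, places)

print(validate_and_round(52.5200066, 13.4049540))           # (52.520007, 13.404954)
print(f"{1e-6 * EQUATOR_M_PER_DEGREE:.3f} m per 0.000001°")  # 0.111 m per 0.000001°
```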

Pay close attention to coordinate order. While GeoJSON uses the format [longitude, latitude], many older systems follow [latitude, longitude]. Reversing these can cause significant errors. In spatial databases like PostGIS or MySQL, tagging geometries with a Spatial Reference System Identifier (SRID) ensures proper interpretation of coordinates. This simple precaution prevents costly misalignments when integrating datasets from various sources.
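A small sketch of keeping the order explicit when serialising to GeoJSON follows. The helpers are hypothetical, and `looks_swapped` is only a cheap sanity check: a swapped pair where both values fall within ±90 is still a valid (but wrong) location, so it cannot be detected this way. PostGIS follows the same x-then-y convention, so `ST_MakePoint` expects longitude first.

```python
import json

def to_geojson_point(lat: float, lon: float) -> str:
    """Serialise a (lat, lon) pair as a GeoJSON Point, which stores [longitude, latitude]."""
    return json.dumps({"type": "Point", "coordinates": [lon, lat]})

def from_geojson_point(text: str) -> tuple:
    """Read a GeoJSON Point back into (lat, lon) order."""
    lon, lat = json.loads(text)["coordinates"][:2]  # ignore an optional altitude
    return lat, lon

def looks_swapped(lat: float, lon: float) -> bool:
    """Sanity check: a 'latitude' beyond ±90 whose partner would be a valid latitude."""
    return abs(lat) > 90 and abs(lon) <= 90

point = to_geojson_point(52.52, 13.405)
print(point)                          # {"type": "Point", "coordinates": [13.405, 52.52]}
print(from_geojson_point(point))      # (52.52, 13.405)
print(looks_swapped(151.21, -33.87))  # True
```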

Careful management of spatial reference systems ensures that all previous efforts to enhance accuracy translate seamlessly into dependable, real-world mapping.

Conclusion

Choosing the right geocoding API requires a careful balance of six key factors. While data quality is essential, it's ineffective if the algorithm struggles with messy input or if geographic coverage is too narrow. Similarly, fast response times lose their value if coordinates are inaccurate, and even precise coordinates fall short when spatial reference systems don’t align properly.

These factors - data freshness, input quality, algorithmic sophistication, geographic coverage, processing speed, and spatial compatibility - are critical for effective geocoding. As Korem succinctly puts it:

"Not all geocoders are created equal and what can seem like minor details can become costly mistakes, especially when you rely on the geocoding results for your business processes".

Errors like input mistakes or false positives can have serious consequences for industries like logistics, insurance, and customer service.

Your choice should align with your specific needs. For logistics, rooftop-level precision and low latency are essential for tasks like real-time routing. Social apps, on the other hand, may only need neighbourhood-level accuracy with wide geographic coverage. If your data comes from users and includes typos, opt for APIs with strong fuzzy matching and input validation. For backend batch processing, prioritise throughput and data storage rights over response speed.

Always test APIs with real-world data samples. Use incomplete entries and common abbreviations to see how well the system performs. Verify that "rooftop accuracy" actually corresponds to building footprints. Additionally, review storage policies - some APIs limit coordinate caching to 30 days, which can lead to repeated calls and higher costs.

For critical applications, consider a multi-vendor strategy. Use a cost-effective provider for standard queries and reserve premium services for low-confidence results or complex cases. Confidence scores can help; flag results below 0.8 for manual review. As the Radar team advises:

"If you treat geocoding results as exact truth, you will build brittle logic. Instead, treat them as probabilistic and design around ranges and confidence".

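The multi-vendor strategy described above can be sketched as a two-step fallback. Both provider functions are hypothetical stand-ins returning `(lat, lon, confidence)`, and the 0.8 threshold comes from the text. Anything still below it after escalation is flagged for manual review rather than treated as exact truth.

```python
from typing import Callable, Optional, Tuple

Result = Tuple[float, float, float]  # (lat, lon, confidence), hypothetical shape
Provider = Callable[[str], Optional[Result]]

def geocode_with_fallback(address: str, primary: Provider, premium: Provider,
                          threshold: float = 0.8) -> Tuple[str, Optional[Result]]:
    """Use the cheaper provider first; escalate only low-confidence or missing results."""
    result = primary(address)
    if result is not None and result[2] >= threshold:
        return "primary", result
    escalated = premium(address)
    if escalated is not None and escalated[2] >= threshold:
        return "premium", escalated
    # Neither provider is confident: keep the best available guess for a human to check.
    return "manual_review", escalated or result
```

The returned label also makes it easy to track what share of traffic reaches the premium provider, which is the main cost lever in this design.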
FAQs

How can I measure geocoding accuracy with my own address data?

To check how accurate your geocoding results are, start by comparing the geocoded coordinates of your addresses to verified or known locations. One effective way to measure this is by calculating the distance between the geocoded points and the actual locations, often using methods like the Haversine distance.

You can also look at the confidence scores provided by the geocoding API. These scores give you an idea of how reliable the results might be. For a more thorough check, manually validate a sample of the results by cross-referencing them on a map or against trusted data sources. This step can help you spot any inconsistencies or errors.
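The distance-based check can be sketched with the Haversine formula. The helper names and the 50 m tolerance are illustrative assumptions. Choose a tolerance that matches your use case, and feed in pairs of geocoded and verified coordinates from your own sample.

```python
import math
from statistics import median

EARTH_RADIUS_M = 6_371_008.8  # mean Earth radius in metres

def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two WGS84 points (spherical approximation)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(min(1.0, math.sqrt(a)))  # clamp rounding noise

def accuracy_report(pairs, tolerance_m: float = 50.0) -> dict:
    """pairs: list of ((geocoded_lat, geocoded_lon), (true_lat, true_lon)) tuples."""
    errors = [haversine_m(*geocoded, *truth) for geocoded, truth in pairs]
    return {
        "median_error_m": median(errors),
        "max_error_m": max(errors),
        "share_within_tolerance": sum(e <= tolerance_m for e in errors) / len(errors),
    }

sample = [
    ((52.520008, 13.404954), (52.520008, 13.404954)),  # exact hit
    ((52.520500, 13.404954), (52.520008, 13.404954)),  # roughly 55 m north of the true point
]
print(accuracy_report(sample))
```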

What confidence score threshold should I use for automated decisions?

Scores close to 1.0 indicate the most reliable matches, but there is no universal cut-off. The right threshold depends on your application's tolerance for errors and on how your geocoding API calculates its scores, since scoring scales differ between providers. Start from a conservative value, such as 0.8, and adjust it after checking a verified sample of results to balance precision against the share of results that need manual review.
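One way to tune the threshold is to calibrate it on a manually verified sample. The sketch below picks the lowest cut-off at which accepted results still meet a target precision. The function name, the 0.98 target, and the sample format are assumptions to adapt to your own error tolerance.

```python
from typing import Iterable, Optional, Tuple

def calibrate_threshold(samples: Iterable[Tuple[float, bool]],
                        target_precision: float = 0.98) -> Optional[float]:
    """Lowest confidence cut-off whose accepted results meet the target precision.

    samples: (confidence, was_correct) pairs from a manually verified sample.
    Returns None if no cut-off reaches the target.
    """
    ranked = sorted(samples, key=lambda s: s[0], reverse=True)
    best = None
    correct = 0
    for i, (confidence, ok) in enumerate(ranked):
        correct += ok
        # Evaluate only at the end of a run of tied scores: a cut-off accepts all ties.
        is_last_of_tie = i + 1 == len(ranked) or ranked[i + 1][0] < confidence
        if is_last_of_tie and correct / (i + 1) >= target_precision:
            best = confidence
    return best

verified = [(0.95, True), (0.90, True), (0.85, True), (0.70, False), (0.60, True)]
print(calibrate_threshold(verified))                         # 0.85
print(calibrate_threshold(verified, target_precision=0.75))  # 0.6
```

A lower cut-off automates more results, so the function returns the lowest one that still meets the precision target. The sample should be large enough that a single mislabelled case does not swing the result.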

How do I avoid CRS/SRID mix-ups (e.g., EPSG:4326) in my pipeline?

To avoid CRS/SRID mix-ups, such as treating projected coordinates as EPSG:4326 latitude and longitude, standardise the CRS at the input stage. Use trusted geospatial libraries for transformations to minimise conversion errors, and validate the CRS of incoming data before processing it to confirm it matches your expectations.

Equally important is to document all CRS assumptions thoroughly. This ensures clarity and allows your team to stay on the same page, reducing the risk of misinterpretation. Clear communication about CRS usage is key to maintaining the integrity of your geospatial data.