When geocoding large datasets, API rate limits can slow you down or even block your requests. This article explains how to avoid these issues while improving efficiency and keeping costs under control. Here’s the gist:

- Throttling: Controls the request rate to avoid errors like HTTP 429 ("Too Many Requests").

- Batching: Groups multiple addresses into single requests to reduce API calls and costs.

- Exponential Backoff: Adds delays between retries to handle temporary errors without overwhelming the server.

- Caching: Reuses previous results to cut redundant API calls and save money.

- Asynchronous Queuing: Processes large datasets in smaller, manageable chunks for scalability.

For example, using these strategies, you can process 250,000 addresses in just over 2.3 hours instead of days, while staying within API limits. Tools like Zip2Geo offer plans starting at €49/month for 100,000 requests, making it easier to manage costs. Combining these techniques ensures smooth workflows, fewer errors, and predictable spending.

Want to know how each method works? Let’s break it down.

1. Request Throttling and Batching

Request throttling and batching are practical strategies to tackle rate limit constraints when working with geocoding APIs. Throttling manages the speed at which your application sends API requests, preventing overloads, while batching combines multiple addresses into a single asynchronous request. Together, these techniques streamline batch geocoding. Throttling helps avoid "429 Too Many Requests" errors by adhering to RPS (requests per second) or QPM (queries per minute) limits. Batching, on the other hand, allows you to process up to 1,000 addresses in one go, cutting down the total number of API calls.

Scalability

Dynamic batching outperforms fixed-interval methods, especially when handling large datasets. Many APIs limit users to 50 RPS and 3,000 QPM. Premium setups can push throughput to around 1,800 addresses per minute, but fixed-interval scheduling can cause synchronization issues when multiple tasks overlap, potentially hitting rate limits. Dynamic queuing avoids these spikes by maintaining a steady flow of requests, staying well below the maximum thresholds. Once scalability is achieved, attention must shift to managing errors effectively.

Error Handling

Optimizing throughput is only half the battle - managing errors is equally important. For rate limit errors like "OVER_QUERY_LIMIT" or HTTP 429 responses, a retry mechanism with fixed attempts (commonly five) and delays of about 1,000 milliseconds can help recover gracefully. For asynchronous batch jobs, monitoring HTTP status codes is essential: a 202 status means the job is still in progress, while 200 indicates completion. Adding jitter to retry intervals helps prevent synchronized spikes in traffic, which could mimic a distributed denial-of-service (DDoS) attack.

Implementation Complexity

Managing the complexity of batch processing is critical for maintaining consistent performance. Fixed batching methods, such as using timers or cron jobs, are straightforward but can lead to burst errors in high-volume situations. Dynamic batching, which often involves asynchronous queues (like Redis) and task managers, offers better control. This approach allows for per-batch retries without stalling the entire process. While it demands more infrastructure and setup, it’s far more reliable when processing millions of records.

Cost Efficiency

In addition to speed and reliability, cost control is a key consideration. Batch geocoding is generally more economical than sending individual requests. For example, processing 100,000 addresses might cost around €300 with traditional methods. However, batch-specific endpoints often come with lower rates, with plans starting at approximately €49 per month for high-volume operations. To avoid unexpected expenses, setting daily caps in your API console can prevent runaway costs caused by automated retries or throttling misconfigurations. This ensures predictable spending and enhances overall efficiency.

sbb-itb-823d7e3

2. Exponential Backoff and Retry Mechanisms

Exponential backoff is a strategy designed to reduce request rates when an API signals overload. Instead of resending requests immediately, it increases the wait time after each failure - doubling the delay with each retry. As Wikipedia puts it:

Exponential backoff is an algorithm that uses feedback to multiplicatively decrease the rate of some process, in order to gradually find an acceptable rate.

This method is particularly effective when server capacity is uncertain, helping systems stabilize naturally at a sustainable request rate.

Scalability

Exponential backoff adapts dynamically to fluctuating loads, making it a scalable solution. For example, when a 429 "Too Many Requests" error occurs, the mechanism increases the delay before the next retry. Adding jitter - randomizing the delay intervals - reduces the risk of the "thundering herd" problem, where multiple clients retry at the same time and overwhelm the server. To keep things manageable, a maximum delay (e.g., 60 seconds) can be applied, ensuring latency remains reasonable while avoiding collisions. This flexibility allows systems to handle varying traffic levels without compromising reliability.

Error Handling

This approach is well-suited for handling transient errors like "OVER_QUERY_LIMIT" or HTTP 429. The gradual delay between retries gives the API time to recover from temporary overloads. AWS Prescriptive Guidance explains:

The retry with backoff pattern improves application stability by transparently retrying operations that fail due to transient errors.

However, not all errors should trigger retries. For instance, permanent issues like "Address not found" indicate a problem that won't resolve with another attempt. It's also critical to avoid exceeding hard limits, such as Google's 3,000 queries per minute cap. Repeated violations may lead to an IP block lasting up to two hours, so pausing requests before hitting these thresholds is essential.

Implementation Complexity

While effective, implementing exponential backoff with added features like jitter and maximum delay introduces complexity. Developers can manage this manually using timers or rely on tools like AWS Step Functions . Libraries such as @geoapify/request-rate-limiter or Geopy's RateLimiter can also handle pacing and retry logic automatically, saving time and effort. Regardless of the method, ensuring idempotency - so that repeated operations don’t cause data corruption - is a must. Combining backoff with throttling and batching further optimizes performance by balancing server load.

Cost Efficiency

Exponential backoff complements throttling and batching by reducing unnecessary API calls. Although most platforms charge only for successful requests, retries during an "OVER_QUERY_LIMIT" state can still drain your quota. Caching offers a proactive way to cut down on redundant calls. For example, Google allows latitude/longitude pairs to be cached for up to 30 days. Additionally, setting daily quota caps in your API console helps prevent unexpected costs from excessive retries or unplanned usage spikes.

3. Caching and Response Reuse

Caching involves storing previously geocoded addresses in memory, allowing your system to reuse results without making repeated API calls. This is especially useful for batch workflows, where duplicate or recurring addresses are common. As Sanborn puts it:

"By storing and reusing coordinates for recently geocoded addresses, you cut redundant calls and improve response times."

OpenCage echoes this sentiment: "The fastest request is the one you don't make." This principle is key to efficient batch processing, as it minimizes unnecessary API requests.

Scalability

Caching can dramatically cut down the number of API requests your system sends by serving results from local storage. This becomes crucial when handling large datasets, helping you stay within rate limits - such as Google's cap of 3,000 queries per minute (about 50 requests per second). For high-demand scenarios, tools like Redis enable distributed caching, allowing multiple application instances to share stored results and avoid redundant lookups across workers.

Error Handling

Caching also acts as a safety net. If the API provider becomes unavailable or rate limits are hit, previously cached results can still be served. This ensures your system remains operational during temporary outages or "OVER_QUERY_LIMIT" errors. However, caching won't solve permanent issues, like an address that cannot be geocoded - those require separate error-handling mechanisms.

Implementation Complexity

Setting up caching involves choosing a storage backend and implementing a cache-invalidation strategy to keep data accurate. For example, Google Maps Platform only allows cached latitude and longitude values to be stored for 30 calendar days, after which they must be deleted. According to their Terms of Service:

"Customer can temporarily cache latitude (lat) and longitude (lng) values from the Geocoding API for up to 30 consecutive calendar days, after which Customer must delete the cached latitude and longitude values."

On the other hand, providers using open data often allow unrestricted storage and redistribution, making implementation simpler. Automating cache expiration with Time-to-Live (TTL) settings can help maintain compliance effortlessly.

Cost Efficiency

Caching can significantly lower API costs by reducing redundant calls. For instance, a popular geocoding service charges around €50 for 50,000 requests, €300 for 100,000 requests, and €900 for 250,000 requests. By serving repeated addresses from the cache, you can handle larger datasets without inflating expenses. Additionally, setting daily quota caps in your API console can prevent unexpected costs from excessive usage. For batch processing, deduplicating your address list beforehand ensures each unique location is geocoded only once. Combining caching with dynamic queuing further optimizes rate limit management and keeps expenses in check.

4. Asynchronous Queuing and Pipeline Processing

Asynchronous queuing takes the concepts of throttling, batching, and backoff strategies to the next level, making large-scale geocoding more efficient.

This method separates the submission of requests from the retrieval of results, allowing high-volume processing without tying up the main thread. Instead of waiting for each geocoding response, you submit jobs to a queue. Later, you retrieve the results in two steps: first, send an HTTP POST to create a job, and then use HTTP GET polling to check the job status until it moves from "pending" to "completed".

Scalability

Pipeline processing breaks datasets into smaller batches - typically 1,000 addresses per batch - and processes them in controlled groups to stay within RPS (requests per second) limits. For example, with a free-tier plan capped at 5 RPS, you can process up to 3,000 addresses in about 10 minutes. A premium plan with a 30 RPS limit can handle 250,000 addresses in just over 2.3 hours. By using thread pools or parallel execution, you can run multiple requests simultaneously while keeping a central throttle to comply with API constraints. Together with throttling, caching, and backoff strategies, asynchronous queuing ensures efficient handling of rate limits.

Error Handling

Asynchronous pipelines excel in handling errors by allowing targeted retries for failed batches, so you don’t have to restart the entire process. For transient errors (4XX/5XX), applying exponential backoff with jitter and limiting retries to five attempts is a practical approach. To maintain accuracy, use unique identifiers (like an objectid) in your input JSON to map each output coordinate back to the original record. Google Maps Platform emphasizes this point:

Large numbers of synchronized requests to Google's APIs can look like a Distributed Denial of Service (DDoS) attack on Google's infrastructure, and be treated accordingly.

These error-handling strategies integrate seamlessly into the asynchronous queuing framework, ensuring smooth operation even when issues arise.

Implementation Complexity

Setting up asynchronous queuing involves some initial challenges. You’ll need message queues (e.g., Redis) and worker processes to manage tasks. Additionally, handling job states (e.g., Submitted, Running, Succeeded, Failed) and managing file uploads/downloads for CSV-based batching adds another layer of complexity. Specialized libraries can simplify rate limiting and batching, while saving results in Newline-Delimited JSON (NDJSON) format is ideal for streaming and efficient processing.

Cost Efficiency

Asynchronous queuing also helps keep costs in check. By spreading requests over time, you can stay within free-tier or low-cost RPS limits. Setting daily quota caps prevents unexpected billing surprises caused by runaway processes. Scheduling bulk geocoding jobs during off-peak hours minimizes competition with real-time user traffic. For added safety, configure your queue worker to operate below maximum throughput - e.g., 2,500 queries per minute instead of the full 3,000 QPM - to handle unexpected spikes without breaching limits. Batch geocoding can cut API call costs by up to 50% compared to processing individual requests.

Strategy Comparison

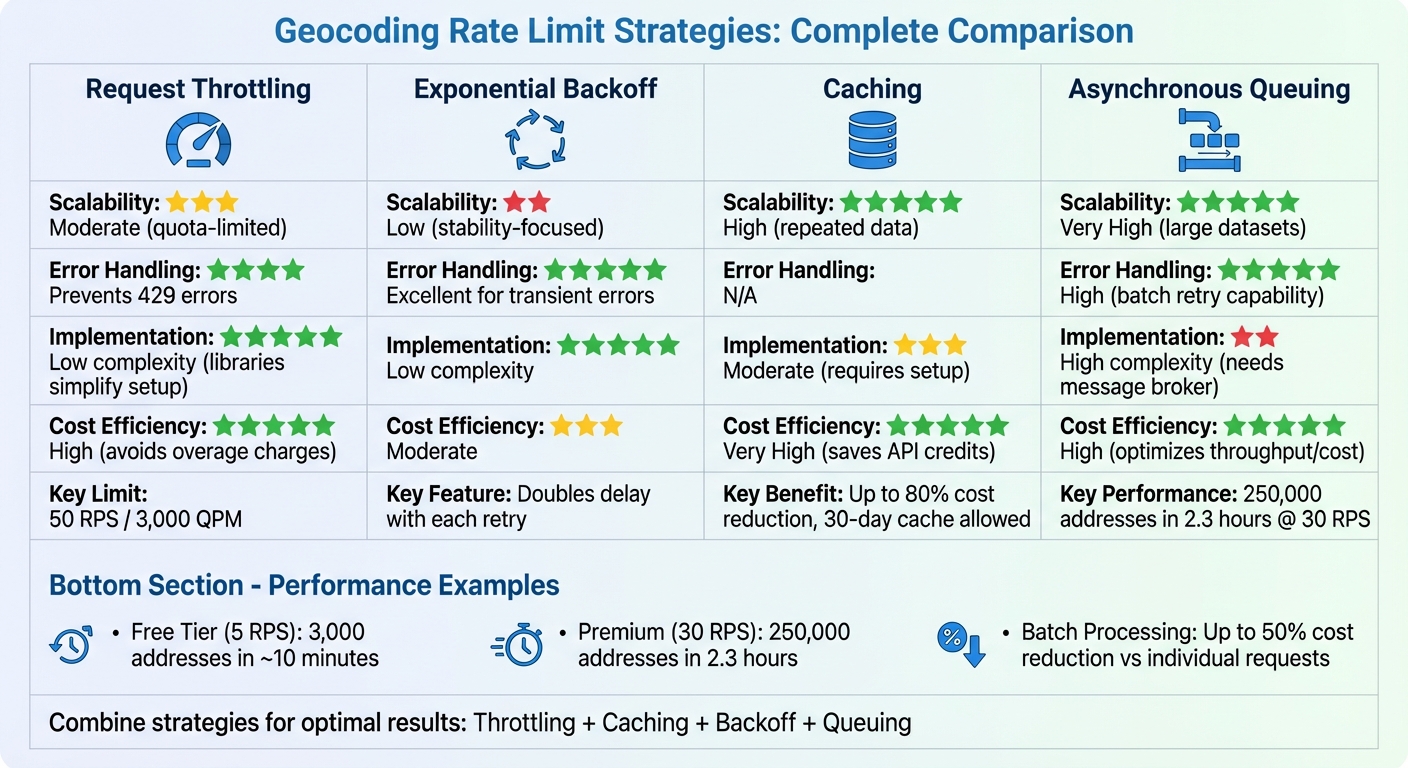

Batch Geocoding Rate Limit Strategies Comparison: Scalability, Error Handling, and Cost Efficiency

Let’s take a closer look at how throttling, backoff, caching, and queuing stack up against each other when handling large geocoding datasets. Each strategy has its own strengths and trade-offs, depending on your goals - whether it's speed, stability, cost savings, or scalability.

Request Throttling ensures compliance with API limits. For instance, the Google Maps Platform caps usage at 3,000 queries per minute (around 50 requests per second), while free-tier services might allow as few as 5 requests per second. Using libraries for rate-limiting keeps implementation straightforward, but manually throttling in JavaScript can quickly become cumbersome as datasets grow.

Exponential Backoff focuses on keeping your system stable during transient failures. It avoids overloading APIs by spacing out retries, as highlighted by Google Maps Platform:

"Poorly designed API clients can place more load than necessary on both the Internet and Google's servers... Following best practices can help you avoid your application being blocked".

While this method is excellent for error recovery, it doesn’t speed up processing - it simply ensures smoother handling of failures.

Caching is a cost-effective choice, particularly when dealing with frequently repeated addresses. By avoiding redundant API calls, caching can slash costs by up to 80% for recurring locations. Some providers even allow caching for up to 30 days. Setting up a caching layer (e.g., with Redis or a database) requires moderate effort but delivers significant savings in the long run.

Asynchronous Queuing shines in large-scale operations. It’s highly scalable, making it perfect for handling massive datasets. By batching requests, you can reduce costs per address compared to sending individual queries. As Sanborn explains:

"If you have, say, 1 million addresses to geocode, you cannot just blast them all at once without running into the QPM wall. Instead, you should design a pipeline that processes addresses in chunks with controlled throughput".

However, setting up asynchronous queuing can be complex, often requiring tools like message queues (e.g., Redis) and worker threads.

Here’s a quick comparison of these strategies:

| Strategy | Scalability | Error Handling | Implementation Complexity | Cost Efficiency |

|---|---|---|---|---|

| Request Throttling | Moderate (quota-limited) | Prevents 429 errors | Low (libraries simplify setup) | High (avoids overage charges) |

| Exponential Backoff | Low (stability-focused) | Excellent for transient errors | Low | Moderate |

| Caching | High (repeated data) | N/A | Moderate (requires setup) | Very High (saves API credits) |

| Asynchronous Queuing | Very High (large datasets) | High (batch retry capability) | High (needs message broker) | High (optimizes throughput/cost) |

Each of these strategies has a distinct role to play, and the best choice often depends on your specific workload and priorities. For example, if cost savings are critical, caching might be your go-to. On the other hand, asynchronous queuing is ideal for large-scale operations where scalability takes precedence.

Conclusion

To manage API rate limits effectively, combining various strategies is key. The right approach depends on your specific needs, but here’s a quick recap of the techniques discussed:

- Request throttling ensures you stay within the 50 requests per second limit, avoiding "429 Too Many Requests" errors.

- Exponential backoff provides a safety net for handling temporary failures.

- Caching minimizes redundant API calls for repeated queries.

- Asynchronous queuing streamlines the processing of large datasets in the background.

When used together, these methods create a robust system capable of handling both routine workloads and unexpected traffic surges.

Zip2Geo's pricing plans align well with these strategies. Here's a breakdown:

- The Free plan allows up to 200 requests per month, ideal for testing and small-scale use.

- The Starter plan (€5/month or €50/year) offers 2,000 requests, perfect for smaller projects.

- The Pro plan (€19/month or €190/year) supports 20,000 requests and includes priority support and an SLA guarantee.

- The Scale plan (€49/month or €490/year) provides 100,000 requests, dedicated support, and uptime monitoring for high-demand operations.

Begin with throttling to manage your request rate, add caching to cut down on repetitive queries, and use exponential backoff for added reliability. As your data needs grow, asynchronous queuing ensures efficient processing. By blending these techniques, developers can optimize geocoding workflows and maintain smooth API interactions.

FAQs

How do I choose the right batch size?

When choosing the best batch size for geocoding, it's crucial to account for the API's rate limits and the size of your dataset. Start by reviewing the API's specifications - look at the maximum number of locations allowed per request and the permitted number of requests per second or minute. Then, tailor your batch size to comply with these restrictions to avoid errors or throttling.

For instance, if the API supports up to 100 locations per batch and allows 3,000 requests per minute, you could process as many as 30,000 locations in sequence. Adjusting your batch size accordingly ensures smoother and more efficient processing.

Which errors should I retry, and which shouldn’t I?

When dealing with retry errors like 429 Too Many Requests or OVER_QUERY_LIMIT, it's smart to use strategies like exponential backoff. This means increasing the wait time between retries gradually, which helps avoid overwhelming the server.

However, if the error is due to exceeding IP or rate limits, don't retry right away. Immediate retries can worsen the issue and may even result in further restrictions. Instead, take a pause and allow some time to pass before trying again. This approach helps ensure you stay within the permitted limits and avoid additional violations.

How can I cache geocoding results without breaking API rules?

To manage geocoding results efficiently while staying within API rate limits, consider storing location data locally or in a database after the first request. Before making a new API call, check your cache to see if the data already exists. If it does, use that stored data. If not, fetch the information from the API and save it for future needs. This approach minimizes API calls, helps you stay within usage limits, and boosts overall efficiency.